Executive note: This article is based on research notes from Milad Saraf and Datanito. It is written in a corporate register for professionals who work with global teams.

The content opens with a short framing note, originally written in Turkish as an entry point for local readers, and the detailed technical discussion follows.

The public narrative around AI models often swings between hype and disappointment. One month we hear that general intelligence is around the corner. The next month we hear that scaling has stalled. In reality, progress is uneven rather than uniformly fast or slow. Next-generation models are improving in specific dimensions: inference speed, context reliability, multimodal fusion, tool use, and reasoning stability. At the same time, they still struggle with long-horizon planning, factual consistency under ambiguity, and cost-efficient deployment at scale.

From my perspective, the right way to evaluate model progress is not by headline benchmark jumps alone. We should evaluate whether a model can produce reliable decision support inside real workflows with risk controls, latency targets, and measurable business outcomes. This is where many systems still fail and where the next wave of research is focused.

Why current AI models still struggle

Today's models are impressive at pattern completion but uneven at bounded reasoning under changing constraints. They can summarize large documents, generate usable code, and draft persuasive narratives, yet they often lose consistency when tasks require multi-step planning, explicit memory management, or strict policy compliance over long interactions. These weaknesses are not trivial: they set the adoption ceiling in regulated and mission-critical environments.

Another limitation is operational: many organizations cannot afford always-on, high-end model usage for every workflow. Cost, latency, and reliability must be balanced. This is why model routing, smaller specialized models, and hybrid retrieval pipelines are becoming central parts of modern AI architecture.
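To make the routing idea concrete, here is a minimal sketch in Python. It assumes each workflow can state a minimum acceptable quality score and a latency budget, and it picks the cheapest model tier that satisfies both. The tier names, prices, latencies, and quality scores are hypothetical placeholders, not measurements of any real model, and `route` is an illustrative helper rather than an existing API.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class ModelTier:
    name: str                  # hypothetical tier label
    cost_per_1k_tokens: float  # hypothetical price, arbitrary currency units
    p95_latency_ms: int        # hypothetical 95th percentile latency
    quality: float             # hypothetical offline evaluation score in [0, 1]


# Illustrative numbers only; real values would come from your own evaluations.
TIERS = [
    ModelTier("small-specialized", 0.10, 200, 0.70),
    ModelTier("mid-general", 0.50, 600, 0.82),
    ModelTier("large-frontier", 3.00, 2000, 0.93),
]


def route(min_quality: float, max_latency_ms: int,
          tiers: Sequence[ModelTier] = TIERS) -> Optional[ModelTier]:
    """Return the cheapest tier that meets both constraints, or None."""
    candidates = [
        t for t in tiers
        if t.quality >= min_quality and t.p95_latency_ms <= max_latency_ms
    ]
    # default=None signals that no tier fits and the request needs a fallback.
    return min(candidates, key=lambda t: t.cost_per_1k_tokens, default=None)


if __name__ == "__main__":
    choice = route(min_quality=0.80, max_latency_ms=1000)
    print(choice.name if choice else "no tier satisfies the constraints")
```

Returning `None` instead of silently picking the largest model keeps the trade-off visible: the caller has to decide whether to relax the latency budget, accept lower quality, or hand the task to a person.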

Research and scaling laws after the first wave

Scaling laws still matter, but the industry has moved beyond simple parameter expansion. The next phase is smarter scaling: better data curation, synthetic data quality control, architecture efficiency, and training objective design that aligns with reasoning and tool use. Researchers are also focusing on post-training alignment that preserves capability while improving controllability and safety.

In practical terms, the question is no longer "can we make a larger model?" The question is "can we make a model that reasons better per unit of compute and deploys reliably in production?" That shift is forcing tighter integration between research, infrastructure, and product teams.

AI reasoning and multimodal intelligence

Reasoning quality improves when models can combine text, code, images, and structured data in one coherent decision loop. Multimodal systems are particularly valuable in enterprise settings where documents, charts, screenshots, and logs must be interpreted together. But multimodal capability only matters when confidence handling is explicit and the model can separate evidence from speculation.

One major research direction is calibration: teaching models to express uncertainty more reliably and to escalate when confidence is weak. Another is test-time planning, where models can call tools, verify intermediate steps, and revise outputs before final delivery. These improvements do not make models perfect, but they materially improve trust.
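Calibration can be measured rather than asserted. The sketch below computes expected calibration error (ECE), a standard metric that bins predictions by stated confidence and compares each bin's average confidence with its observed accuracy. It also includes a simple escalation rule. The function names, the bin count, and the 0.75 threshold are assumptions chosen for illustration, and the sketch presumes you already have a confidence score per answer plus a ground-truth correctness label.

```python
from typing import Sequence


def expected_calibration_error(confidences: Sequence[float],
                               correct: Sequence[bool],
                               n_bins: int = 10) -> float:
    """Weighted average gap between stated confidence and observed accuracy."""
    total = len(confidences)
    if total == 0:
        return 0.0
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        # Confidence 1.0 would index past the end, so clamp to the last bin.
        idx = min(int(conf * n_bins), n_bins - 1)
        bins[idx].append((conf, ok))
    ece = 0.0
    for bucket in bins:
        if not bucket:
            continue
        avg_conf = sum(c for c, _ in bucket) / len(bucket)
        accuracy = sum(1 for _, ok in bucket if ok) / len(bucket)
        ece += (len(bucket) / total) * abs(avg_conf - accuracy)
    return ece


def should_escalate(confidence: float, threshold: float = 0.75) -> bool:
    """Hypothetical policy: route to a human when confidence is below threshold."""
    return confidence < threshold


if __name__ == "__main__":
    # Four answers at 0.9 stated confidence, three of them correct.
    print(expected_calibration_error([0.9, 0.9, 0.9, 0.9],
                                     [True, True, True, False]))
    print(should_escalate(0.6))
```

A fixed threshold is only meaningful when ECE is low: if stated confidence does not track accuracy, the escalation rule filters on noise.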

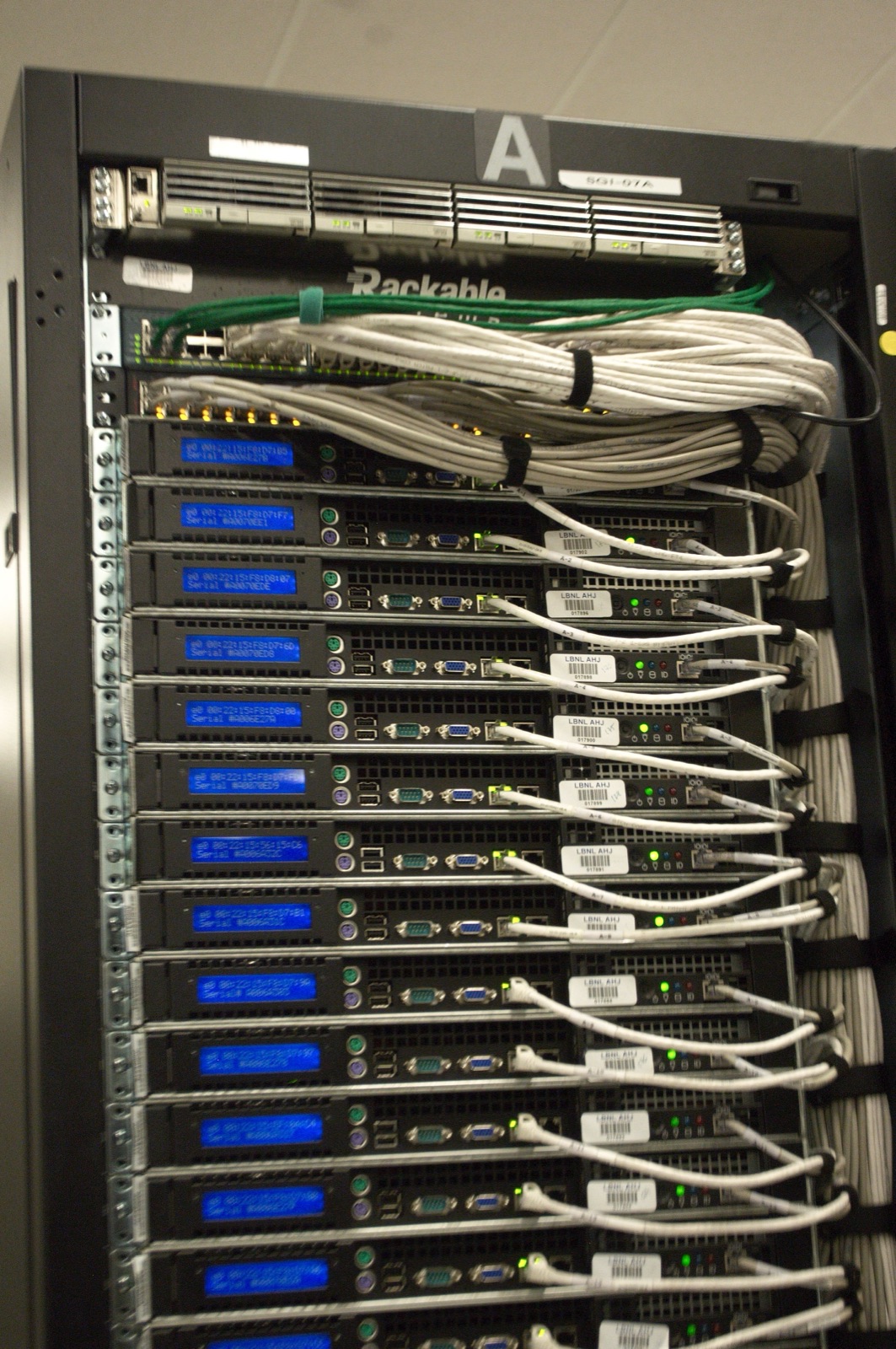

Infrastructure and training challenges

Next-generation AI progress is constrained as much by infrastructure as by model ideas. Training large systems requires efficient data pipelines, high-bandwidth compute fabrics, fault-tolerant orchestration, and careful energy management. Inference at scale introduces a second challenge: serving reliability under variable workloads while preserving response quality and governance controls.

Organizations also face model lifecycle challenges: continuous evaluation, drift detection, prompt and retrieval versioning, and rollback safety. Without these capabilities, even a strong base model can fail operationally. The teams that succeed are investing in platform discipline, not just model experiments.
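One way to picture continuous evaluation with rollback safety is a release gate that compares a candidate model's evaluation metrics against the current baseline. The sketch below is a simplified illustration under stated assumptions: metrics are "higher is better" scores in [0, 1], the metric names are hypothetical, and the 0.02 regression tolerance is an arbitrary example value, not a recommended standard.

```python
from typing import Dict, Optional, Tuple

Regressions = Dict[str, Tuple[float, Optional[float]]]


def release_decision(baseline: Dict[str, float],
                     candidate: Dict[str, float],
                     max_regression: float = 0.02) -> Tuple[str, Regressions]:
    """Return ("promote" | "rollback", regressions) for a candidate model.

    A metric counts as a regression if the candidate did not report it or
    if it dropped by more than max_regression relative to the baseline.
    """
    regressions: Regressions = {}
    for metric, base_score in baseline.items():
        cand_score = candidate.get(metric)
        if cand_score is None or base_score - cand_score > max_regression:
            regressions[metric] = (base_score, cand_score)
    decision = "rollback" if regressions else "promote"
    return decision, regressions


if __name__ == "__main__":
    # Hypothetical metric names and scores, for illustration only.
    baseline = {"accuracy": 0.90, "policy_compliance": 0.99}
    candidate = {"accuracy": 0.91, "policy_compliance": 0.95}
    print(release_decision(baseline, candidate))
```

Treating a missing metric as a regression is a deliberate choice here: a candidate that skipped an evaluation should not be promoted by default.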

What systems like Quanta AI aim to solve

At Datanito, we are exploring this next phase through Quanta AI. Our focus is not on abstract model theater. It is on speed, reasoning consistency, and scalable execution in enterprise environments. Our upcoming Quanta 3 model line is designed for faster inference, deeper contextual understanding, and more reliable reasoning across complex tasks where auditability and control matter.

Quanta's direction reflects a broader market need: AI systems that can move from impressive demos to dependable operating infrastructure. That means improving both model intelligence and delivery architecture at the same time. It also means building with governance from day one.

- Improve reasoning consistency under multi-step tasks.

- Lower inference latency for real-time enterprise workflows.

- Increase context depth without uncontrolled cost growth.

- Strengthen confidence signaling and human escalation paths.

- Operationalize continuous evaluation across model updates.

The future of intelligence will not be defined by model size alone. It will be defined by whether systems can reason responsibly, run efficiently, and produce measurable value in complex environments. Next-generation AI models are moving in that direction, but success will depend on disciplined research, infrastructure excellence, and honest evaluation standards.

Closing note: This framework is designed to be used for in-house implementation and for producing measurable results. Teams can adapt this approach to their own industry context.